From Bash Scripts to AI CTO

Prateek Karnal didn't set out to build an AI that could manage software teams. Like most builders, he started with a specific pain point: coordinating multiple AI coding agents without them stepping on each other's work or getting stuck in infinite loops. [7]

The traditional approach—ReAct loops where agents reason, act, and observe in sequence—works fine for single-agent tasks. But scale to multiple agents working on the same codebase, and you hit immediate problems. Agents overwrite each other's changes. They can't handle merge conflicts. When CI fails, there's no clear escalation path. Most critically, they operate in isolation without learning from each other's successes and failures.

Composio, the company behind Agent Orchestrator, recognized that multi-agent coordination is fundamentally different from single-agent intelligence. Instead of making individual agents smarter, the team built infrastructure for making agent teams more effective. The result is a system reported to autonomously fix 84.6% of CI failures across 41 test cases and to handle 68% of code review issues without human intervention. [3]

But the real validation came when they turned the system on itself. "The thing being built was the thing managing its own construction," Karnal explains. "We wanted to see if an AI system could not just write code, but manage an entire software development process." [2]

Architecture: The Orchestration Layer

At its core, Agent Orchestrator solves three fundamental problems that break traditional multi-agent systems: isolation, feedback routing, and stuck agent detection. [4]

Isolation happens through Git worktrees—each agent gets its own branch and workspace, eliminating the file conflicts that plague naive multi-agent setups. When Agent A is refactoring the authentication system while Agent B adds new API endpoints, they're working in completely separate environments until merge time.
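As a rough illustration of the worktree idea, the sketch below builds the `git worktree add` command that would give each agent its own branch and directory. The function name, the `agent/<id>` branch scheme, and the sibling-directory layout are assumptions made for this example, not Agent Orchestrator's actual conventions.

```python
from pathlib import Path


def worktree_command(repo_root: Path, agent_id: str, base: str = "main") -> list[str]:
    """Build the git command that gives one agent its own branch and directory."""
    branch = f"agent/{agent_id}"  # hypothetical branch naming scheme
    workdir = repo_root.parent / f"{repo_root.name}-{agent_id}"  # hypothetical layout
    # `git worktree add -b <branch> <path> <start-point>` creates a new branch
    # checked out in a separate directory that shares the repository's history.
    return ["git", "-C", str(repo_root), "worktree", "add",
            "-b", branch, str(workdir), base]


if __name__ == "__main__":
    # Two agents, two independent working directories on two branches.
    for agent in ("auth-refactor", "api-endpoints"):
        print(" ".join(worktree_command(Path("/srv/repos/shop"), agent)))
```

Returning the argument list instead of running it keeps the sketch side-effect free; a real setup would pass it to `subprocess.run` and handle failures such as an already existing branch.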

Feedback routing ensures that CI failures, code review comments, and merge conflicts reach the right agents. Instead of broadcasting every event to every agent (expensive and noisy) or hoping agents will poll for updates (unreliable), the orchestrator maintains a directed graph of which agents care about which events. When a test fails, only the agents responsible for that code path get notified.
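To make the routing idea concrete, here is a minimal sketch that simplifies the directed graph to a map from path prefixes to the agents that claimed them. The class name, method names, and prefix-matching rule are all assumptions for illustration; the real orchestrator may track interest in events quite differently.

```python
from collections import defaultdict


class FeedbackRouter:
    """Routes an event only to agents that claimed the affected paths."""

    def __init__(self) -> None:
        # Edges of the graph: path prefix -> agents interested in it.
        self._owners: dict[str, set[str]] = defaultdict(set)

    def claim(self, agent_id: str, path_prefix: str) -> None:
        """Record that an agent is responsible for files under a prefix."""
        self._owners[path_prefix].add(agent_id)

    def route(self, changed_files: list[str]) -> set[str]:
        """Return the agents to notify for a failure touching these files."""
        recipients: set[str] = set()
        for path in changed_files:
            for prefix, agents in self._owners.items():
                if path.startswith(prefix):
                    recipients |= agents
        return recipients


router = FeedbackRouter()
router.claim("agent-a", "src/auth/")
router.claim("agent-b", "src/api/")
print(router.route(["src/auth/login.py"]))  # only agent-a is notified
```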

Stuck agent detection uses JSONL event tracking to identify when agents stop making progress. Traditional systems rely on timeouts or manual intervention. Agent Orchestrator watches for patterns—an agent making the same API call repeatedly, or generating identical code changes—and automatically escalates or reassigns work.
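One simple way to detect this kind of repetition is to fingerprint each JSONL event and check whether the most recent ones are identical. The sketch below assumes each event is a JSON object with `action` and `payload` fields and uses a window of three; both the field names and the threshold are hypothetical, not the project's actual event schema.

```python
import json
from collections import deque
from typing import Iterable


def is_stuck(jsonl_lines: Iterable[str], window: int = 3) -> bool:
    """Return True if the last `window` events are identical actions."""
    recent: deque[tuple] = deque(maxlen=window)
    for line in jsonl_lines:
        line = line.strip()
        if not line:
            continue  # tolerate blank lines in the log
        event = json.loads(line)
        # Fingerprint the event; "action" and "payload" are assumed field names.
        payload = json.dumps(event.get("payload"), sort_keys=True)
        recent.append((event.get("action"), payload))
    # Stuck means: enough history, and every recent fingerprint is the same.
    return len(recent) == window and len(set(recent)) == 1


log = ['{"action": "call_api", "payload": {"url": "/status"}}'] * 3
if is_stuck(log):
    print("escalate or reassign this agent's work")
```

A production detector would likely also consider timestamps and near-duplicate diffs, but the repeated-fingerprint check captures the core pattern described above.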

The plugin architecture makes this practical for real engineering teams. Eight swappable slots handle everything from runtime environments (tmux, Docker, Kubernetes) to agent types (Claude Code, Aider, Codex) to workspace management (worktrees, clones) to issue tracking (GitHub, Linear). [1]
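A swappable-slot design can be pictured as a table of allowed implementations per slot plus a validation step. The sketch below covers only the four slots named above, and the slot keys and lowercase plugin identifiers are labels invented for this example rather than the project's real configuration names.

```python
# Options named in the text above; the slot keys and identifiers are hypothetical labels.
KNOWN_OPTIONS: dict[str, set[str]] = {
    "runtime": {"tmux", "docker", "kubernetes"},
    "agent": {"claude-code", "aider", "codex"},
    "workspace": {"worktree", "clone"},
    "tracker": {"github", "linear"},
}


def validate_slots(selection: dict[str, str]) -> list[str]:
    """Return a list of problems with a slot selection (empty if it is valid)."""
    errors: list[str] = []
    for slot, choice in selection.items():
        options = KNOWN_OPTIONS.get(slot)
        if options is None:
            errors.append(f"unknown slot: {slot}")
        elif choice not in options:
            errors.append(f"{slot}: unsupported plugin {choice!r}")
    return errors


# Swapping one slot does not affect the others.
print(validate_slots({"runtime": "docker", "agent": "aider", "tracker": "linear"}))
```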

Configuration happens through YAML files that define reactive workflows. When CI fails, retry twice with the same agent, then escalate to a senior agent, then page a human if still failing. When a PR gets review comments, route them to the original author agent first, then to a code review specialist if not resolved in 2 hours.
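The CI rule described above amounts to an escalation ladder. The sketch below expresses it as plain Python data rather than YAML, so it should be read as a model of the logic, not the tool's configuration syntax; the `Step` type and the handler labels are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    handler: str   # who acts at this rung of the ladder
    attempts: int  # how many tries this handler gets before escalation


# "Retry twice with the same agent, then a senior agent, then a human."
CI_FAILURE_POLICY = [
    Step("same-agent", 2),
    Step("senior-agent", 1),
    Step("human", 1),
]


def handler_for(policy: list[Step], failures_so_far: int) -> str:
    """Pick who handles the next attempt, given how many attempts already failed."""
    remaining = failures_so_far
    for step in policy:
        if remaining < step.attempts:
            return step.handler
        remaining -= step.attempts
    return policy[-1].handler  # past the end of the ladder: stay with the last rung


for failures in range(4):
    print(failures, handler_for(CI_FAILURE_POLICY, failures))
```

Keeping the policy as data means the same function can serve other events, such as review comments, with a different ladder.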

The Self-Build: 8 Days of Autonomous Development

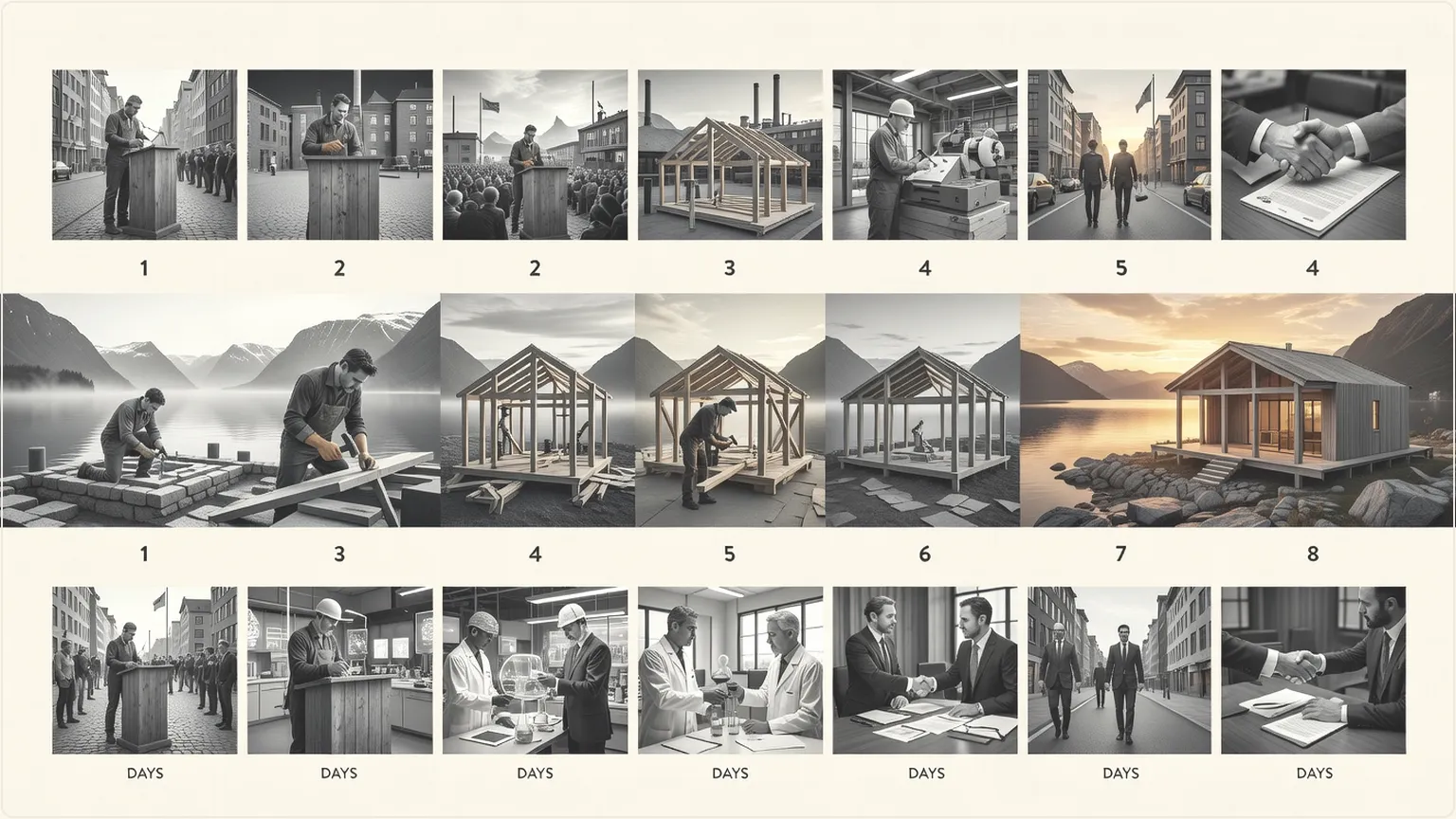

The most compelling demonstration of Agent Orchestrator's capabilities is its own creation story. From February 13-20, 2026, the system built itself with minimal human intervention—a real-world stress test of multi-agent coordination at scale. [2]

The numbers tell the story: 30 concurrent agents at peak, 747 commits, 102 pull requests with 86% created by AI and 65% successfully merged, 700 review comments with 99% handled by AI. Claude Opus 4.6 contributed 512 commits while Sonnet 4.5 added 373. Human effort: approximately 3 focused days of high-level direction and escalation handling.

But the interesting insights come from the failure modes. Merge conflicts initially broke the system until agents learned to coordinate through the orchestrator's conflict resolution workflows. Code review cycles created infinite loops until timeout and escalation logic was added. Test failures cascaded across agents until isolated worktrees and targeted feedback routing contained the damage.

"Orchestration matters more than any individual agent improvement," Karnal reflects. "The question isn't how smart can we make one agent, but how good can a system get at deploying, observing, and improving dozens of agents working in parallel." [2]

The self-build also revealed emergent behaviors that weren't explicitly programmed. Agents began specializing—some focused on frontend components, others on backend services, still others on testing and documentation. They developed informal handoff protocols, with agents leaving detailed commit messages and PR descriptions for their colleagues.

Beyond ReAct: Structured Workflows for Production

Traditional AI agents rely on ReAct loops—reason about the problem, take an action, observe the result, repeat. This works for isolated tasks but breaks down in complex, multi-step workflows where actions have dependencies and side effects. [3]

Agent Orchestrator introduces structured stateful workflows with explicit planner/executor separation. Instead of each agent reasoning about everything from scratch, a central planner maps out the work and assigns specific, bounded tasks to executor agents. This reduces cognitive load on individual agents while maintaining global coherence.
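The planner/executor split can be sketched as a plan of bounded tasks with explicit dependencies and a loop that hands each ready task to an executor. The `Task` fields, the `run` function, and the example task names are hypothetical; the sketch only illustrates the separation of concerns, not the project's scheduler.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Task:
    task_id: str
    description: str
    depends_on: list[str] = field(default_factory=list)


def ready_tasks(plan: list[Task], done: set[str]) -> list[Task]:
    """Tasks that are not finished and whose dependencies are all finished."""
    return [t for t in plan
            if t.task_id not in done and all(d in done for d in t.depends_on)]


def run(plan: list[Task], execute: Callable[[Task], None]) -> list[str]:
    """Planner loop: dispatch bounded tasks to an executor in dependency order."""
    done: set[str] = set()
    order: list[str] = []
    while len(done) < len(plan):
        batch = ready_tasks(plan, done)
        if not batch:
            raise ValueError("plan has a dependency cycle or a missing task")
        for task in batch:
            execute(task)  # the executor sees one task, not the whole plan
            done.add(task.task_id)
            order.append(task.task_id)
    return order


plan = [
    Task("tests", "write integration tests", ["api"]),
    Task("schema", "add users table"),
    Task("api", "expose /users endpoint", ["schema"]),
]
print(run(plan, lambda task: None))
```

The executor callable receives a single `Task`, which mirrors the point that individual agents do not need to reason about the global plan.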

Just-in-time tool routing means agents only get access to the tools they need for their current task. An agent working on frontend styling doesn't need database migration tools. An agent fixing CI failures doesn't need access to the deployment pipeline. This reduces both cost (fewer tokens in context) and risk (fewer ways for agents to cause unintended side effects).
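In its simplest form, just-in-time tool routing is an allowlist keyed by task kind, with a narrow default for anything unrecognized. The task kinds and tool names below are invented for this sketch and do not come from Agent Orchestrator.

```python
# Hypothetical task kinds and tool names, for illustration only.
TOOLSETS: dict[str, set[str]] = {
    "frontend-styling": {"read_file", "write_file", "run_linter"},
    "ci-fix": {"read_file", "write_file", "run_tests", "read_ci_logs"},
    "db-migration": {"read_file", "write_file", "run_migration"},
}

# Unknown task kinds get the narrowest possible toolset.
DEFAULT_TOOLS = frozenset({"read_file"})


def tools_for(task_kind: str) -> frozenset[str]:
    """Return only the tools an agent should see for this kind of task."""
    return frozenset(TOOLSETS.get(task_kind, DEFAULT_TOOLS))


def authorize(task_kind: str, tool: str) -> bool:
    """Check a tool call against the task's allowlist before executing it."""
    return tool in tools_for(task_kind)


print(authorize("frontend-styling", "run_migration"))
print(sorted(tools_for("ci-fix")))
```

Only the tools returned by `tools_for` would be placed in the agent's context, which is where both the token savings and the reduced blast radius come from.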

Error recovery branches handle the reality that AI agents fail in unpredictable ways. Instead of hoping agents will gracefully handle every edge case, the orchestrator defines explicit recovery paths. If an agent can't resolve a merge conflict after 3 attempts, escalate to a human. If CI keeps failing on the same test, assign a different agent with fresh context.
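An explicit recovery path can be modeled as a bounded retry with a named fallback. The helper below is a sketch under that assumption: `with_recovery`, its parameters, and the returned strings are hypothetical, and it treats an exception inside an attempt as just another failed attempt.

```python
from typing import Callable


def with_recovery(attempt: Callable[[], bool],
                  max_attempts: int,
                  fallback: Callable[[], str]) -> str:
    """Try an action a bounded number of times, then take the recovery branch."""
    for n in range(1, max_attempts + 1):
        try:
            if attempt():
                return f"resolved on attempt {n}"
        except Exception:
            # A crash counts as a failed attempt rather than ending the workflow.
            pass
    return fallback()


def resolve_conflict() -> bool:
    return False  # stand-in for an agent that keeps failing


def escalate_to_human() -> str:
    return "escalated to human"


# "If an agent can't resolve a merge conflict after 3 attempts, escalate."
print(with_recovery(resolve_conflict, max_attempts=3, fallback=escalate_to_human))
```

The same shape covers the second rule in the paragraph above by passing a fallback that reassigns the task to a different agent with fresh context.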

The result is observability that actually helps debug multi-agent systems. Traditional setups give you a wall of agent logs with no clear narrative. Agent Orchestrator provides a timeline view showing which agents worked on what, when they handed off work, where they got stuck, and how conflicts were resolved.
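A timeline of this kind can be derived by merging per-agent events and sorting them by time. The sketch below assumes a small hypothetical `Event` record with a timestamp, agent, kind, and detail; the real event schema and the timeline UI are not described in the sources.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    ts: float    # seconds since the run started
    agent: str
    kind: str    # e.g. "started", "handoff", "stuck", "conflict_resolved"
    detail: str


def timeline(events: list[Event]) -> list[str]:
    """Merge events from all agents into one chronological narrative."""
    ordered = sorted(events, key=lambda e: e.ts)
    return [f"{e.ts:>7.1f}s  {e.agent:<10} {e.kind:<18} {e.detail}" for e in ordered]


events = [
    Event(42.0, "agent-b", "stuck", "same diff generated three times"),
    Event(0.0, "agent-a", "started", "refactor auth module"),
    Event(30.5, "agent-a", "handoff", "opened PR for review"),
]
for line in timeline(events):
    print(line)
```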

The Builder's Guide: From Chaos to Coordination

Getting started with Agent Orchestrator is deliberately simple: `ao start <repo>` spins up a basic configuration with sensible defaults. But the power comes from customizing it for your specific development workflows. [4]

Configuration starts with defining your agent roles. Don't just throw generic coding agents at problems. Create specialists: a frontend agent that understands your component library, a backend agent familiar with your API patterns, a testing agent that knows your quality standards, a DevOps agent that understands your deployment pipeline.

Escalation paths are critical for production use. Define clear handoff rules: when does an agent give up and ask for help? How long should an agent work on a problem before escalating? Who gets paged when the system can't make progress? The self-build revealed that most failures happen at boundaries—merge conflicts, integration tests, deployment issues—where clear escalation prevents infinite loops.

Feedback loops need careful tuning. Too much feedback creates noise and confusion. Too little leaves agents working with stale information. The sweet spot is targeted, actionable feedback routed to the specific agents who can act on it. CI failures go to the agents who touched the failing code. Code review comments go to the original authors. Performance regressions go to the optimization specialists.

Human-in-the-loop should be minimal but strategic. Agents handle routine execution. Humans handle architectural decisions, requirement clarification, and complex debugging. The goal isn't to eliminate human judgment but to focus it on the decisions that matter most.

What Changes When AI Builds the Software

Agent Orchestrator points toward a fundamental shift in how software gets built. We're moving from individual productivity tools (Copilot, Cursor) to autonomous development teams where humans provide direction and agents handle execution.

This isn't just about speed—though the productivity gains are significant. It's about changing the nature of software development work. When agents can handle routine coding, testing, and deployment, human developers become architects, product designers, and system integrators. The bottleneck shifts from typing code to making decisions about what to build and how to organize it.

Nordic companies are particularly well-positioned for this transition. The region's emphasis on automation, systematic thinking, and human-centered design aligns perfectly with orchestrated AI development. While Silicon Valley chases the latest model improvements, Nordic builders focus on making AI systems reliable, predictable, and useful for real engineering teams.

The implications extend beyond individual companies. When software development becomes primarily about orchestration rather than implementation, competitive advantages shift. The companies that win won't necessarily have the best individual developers—they'll have the best systems for coordinating AI development teams.

Open source becomes even more critical in this world. Agent Orchestrator's success comes partly from its open architecture—teams can customize, extend, and contribute improvements. Proprietary agent systems become black boxes that can't adapt to specific workflows. Open orchestration platforms become the foundation for entire ecosystems of specialized agents and tools.

The Post-Code Era: Orchestration as the New Programming

We're entering what Up North AI calls the post-code era—not because code disappears, but because writing code becomes a commodity. The scarce resource shifts from implementation to judgment: what to build, how to organize it, when to ship it, how to maintain it.

Agent Orchestrator is an early piece of production-oriented infrastructure for this transition. It commoditizes execution while putting the spotlight on human judgment about architecture, coordination, and escalation. Code is free. Judgment isn't.

The builders who thrive in this environment won't be the fastest coders or the most prolific committers. They'll be the ones who best understand how to orchestrate autonomous systems to achieve human goals. They'll design workflows, define quality standards, and make architectural decisions while agents handle the mechanical work of implementation.

This is the future of software development: human creativity and judgment directing AI execution and optimization. Agent Orchestrator shows us what that future looks like—and it's arriving faster than most people realize.

Sources

[1] https://github.com/ComposioHQ/agent-orchestrator

[2] https://composio.dev/blog/the-self-improving-ai-system-that-built-itself

[3] https://www.marktechpost.com/2026/02/23/composio-open-sources-agent-orchestrator-to-help-ai-developers-build-scalable-multi-agent-workflows-beyond-the-traditional-react-loops

[4] https://mintlify.com/ComposioHQ/agent-orchestrator/introduction

[5] https://composio.dev/

[6] https://www.reddit.com/r/machinelearningnews/comments/1rd8cfk/composio_open_sources_agent_orchestrator_to_help

[7] https://pkarnal.com/blog/open-sourcing-agent-orchestrator
